At its DevDay, OpenAI rolled out an array of updates and features [blog post, keynote recording]. Here’s a succinct rundown:

- Recap: ChatGPT released Nov 30, 2022 with GPT-3.5; GPT-4 released in March 2023. Since then: voice input/output, vision input with GPT-4V, text-to-image with DALL·E 3, and ChatGPT Enterprise with enterprise security, higher-speed access, and longer context windows. 2M developers, 92% of Fortune 500 companies building products on top of GPT, 100M weekly active users.

- New GPT-4 Turbo: OpenAI’s most advanced AI model, 128K context window, knowledge up to April 2023. Reduced pricing: $0.01/1K input tokens (3x cheaper), $0.03/1K output tokens (2x cheaper). Improved function calling (multiple functions in single message, always return valid functions with JSON mode, improved accuracy on returning right function parameters). More deterministic model output via reproducible outputs beta. Access via

gpt-4-1106-preview, stable release pending. - GPT-3.5 Turbo Update: Enhanced

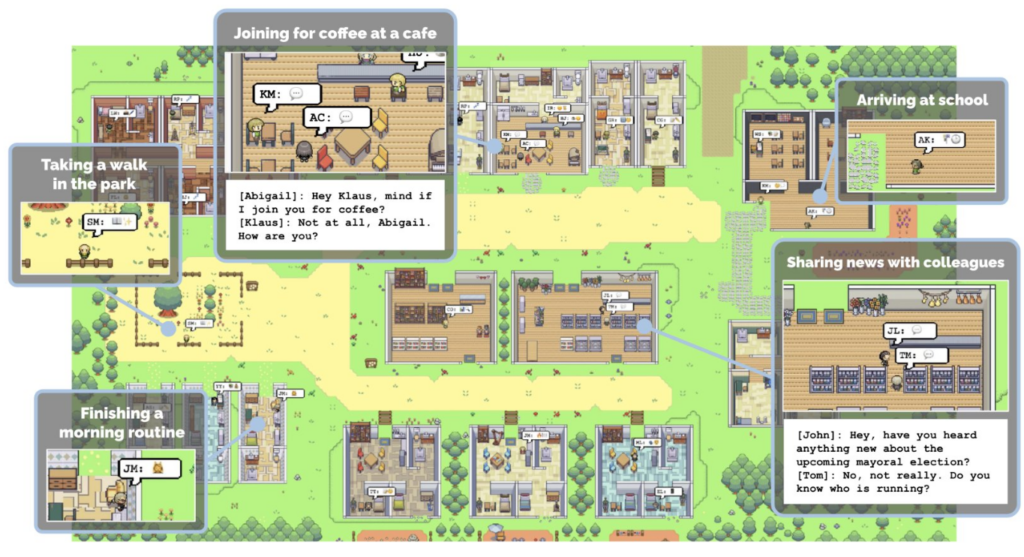

gpt-3.5-turbo-1106model with 16K default context. Lower pricing: $0.001/1K input, $0.002/1K output. Fine-tuning available, reduced token prices for fine-tuned usage (input token prices 75% cheaper to $0.003/1K, output token prices 62% cheaper to $0.006/1K). Improved function calling, reproducible outputs feature. - Assistants API: Beta release for creating AI agents in applications. Supports natural language processing, coding, planning, and more. Enables persistent Threads, includes Code Interpreter, Retrieval, Function Calling tools. Playground integration for no-code testing.

- Multimodal Capabilities: GPT-4 Turbo supports visual inputs in Chat Completions API via `gpt-4-vision-preview`. Integration with DALL·E 3 for image generation via Image generation API. Text-to-speech (TTS) model with six voices introduced.

- Customizable GPTs in ChatGPT: New feature called GPTs allowing integration of instructions, data, and capabilities. Enables calling developer-defined actions, control over user experience, streamlined plugin to action conversion. Documentation provided for developers.
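
To put the per-token prices from the rundown into perspective, here is a minimal sketch that estimates the cost of a single request. The prices are the ones quoted above; the `Price` class, the `estimate_cost` helper, and the `"gpt-3.5-turbo-1106 (fine-tuned)"` key are illustrative names introduced here, not part of any OpenAI library or real model identifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD price per 1K tokens."""
    input_per_1k: float
    output_per_1k: float


# Prices as quoted in the rundown above (USD per 1K tokens).
PRICES = {
    "gpt-4-1106-preview": Price(0.01, 0.03),
    "gpt-3.5-turbo-1106": Price(0.001, 0.002),
    # Illustrative label only, not a real model id.
    "gpt-3.5-turbo-1106 (fine-tuned)": Price(0.003, 0.006),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request."""
    price = PRICES[model]
    return (
        (input_tokens / 1000) * price.input_per_1k
        + (output_tokens / 1000) * price.output_per_1k
    )


if __name__ == "__main__":
    # A request that fills most of a long context window with input.
    for model in PRICES:
        cost = estimate_cost(model, input_tokens=100_000, output_tokens=1_000)
        print(f"{model}: ${cost:.3f}")
```

With 100K input tokens and 1K output tokens, the GPT-4 Turbo request comes to about $1.03 and the base GPT-3.5 Turbo request to about $0.10, which shows how quickly long-context prompts dominate the bill.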
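
JSON mode and reproducible outputs are both set through request parameters on the Chat Completions API. The sketch below shows one way such a request might look with the official `openai` Python package (v1 client), assuming an `OPENAI_API_KEY` environment variable is set. The `build_request` and `ask` helpers, the prompt text, and the seed value are assumptions made for illustration.

```python
import json


def build_request(question: str, seed: int = 42) -> dict:
    """Build Chat Completions arguments using JSON mode and a fixed seed."""
    return {
        "model": "gpt-4-1106-preview",
        # Reproducible outputs (beta): same seed and parameters should give
        # mostly consistent completions.
        "seed": seed,
        # JSON mode: the model is constrained to emit a valid JSON object.
        "response_format": {"type": "json_object"},
        "messages": [
            # JSON mode expects the word "JSON" to appear in the conversation.
            {
                "role": "system",
                "content": "Reply with a JSON object containing an 'answer' key.",
            },
            {"role": "user", "content": question},
        ],
    }


def ask(question: str) -> dict:
    """Send the request and parse the JSON reply (needs network access)."""
    from openai import OpenAI  # imported lazily so build_request works offline

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(**build_request(question))
    # system_fingerprint helps detect backend changes that affect determinism.
    print("system_fingerprint:", response.system_fingerprint)
    return json.loads(response.choices[0].message.content)
```

Calling `ask("What is the context window of GPT-4 Turbo?")` would return a parsed dictionary. Keeping request construction separate from the network call makes the parameters easy to inspect or log before anything is sent.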